Almost Timely News: Where Generative AI and Language Models are Probably Going in 2024

2023-12-10

Almost Timely News: Where Generative AI and Language Models are Probably Going in 2024 (2023-12-10) :: View in Browser

Content Authenticity Statement

100% of this newsletter's content was generated by me, the human. When I use AI, I'll disclose it prominently. Learn why this kind of disclosure is important.

Watch This Newsletter On YouTube 📺

Click here for the video 📺 version of this newsletter on YouTube »

Click here for an MP3 audio 🎧 only version »

What's On My Mind: Where Generative AI and Language Models are Probably Going in 2024

As it's heading towards the end of the year and a lot of people are starting to publish their end of year lists and predictions, let's think through where things are right now with generative AI and where things are probably going.

I wrote yesterday on LinkedIn a bit about adversarial models, and I figured it's worth expanding on that here, along with a few other key points. We're going to start off with a bit of amateur - and I emphasize amateur as I have absolutely no formal training - neuroscience, because it hints at what's next with language models and generative AI.

Our brain isn't just one brain. We know even from basic grade school biology that our brain is composed of multiple pieces - the cerebrum, the cerebellum, the brain stem, etc. And within those major regions of the brain, you have subdivisions - the occipital lobe, the parietal lobe, and so on. Each of these regions performs specific tasks - vision, language, sensory data, etc. and those regions are specialized. That's why traumatic brain injury can be so debilitating, because the brain isn't just one monolithic environment. It's really a huge cluster of small regions that all perform specific tasks.

If you look at the brain and recognize that it is really like 15 brains working together in a big network, you start to appreciate how complex it is and how much we take for granted. Just the simple act of opening this email or video and consuming it requires motor skills, language skills, vision skills, auditory skills, and high level thinking and processing. It's millions, maybe billions of computations per second just to consume a piece of content.

Why do we care about this? Because this perspective - of a massive network of computer models all integrated together - is where generative AI is probably going and more important, where it needs to go if we want AI to reach full power.

In the first half-decade of generative AI - because this all began in earnest in 2017 with Google's release of the transformers model - we focused on bigger and better models. Each generation of language model got bigger and more complex - more parameters, more weights, more tokens, etc. This model has 175 billion parameters, that model was trained on 1 trillion tokens. Bigger, bigger, bigger. And this worked, to a degree. Andrej Karpathy of OpenAI recently said in a talk that there doesn't appear to be any inherent limit to the transformers architecture except compute power - bigger means better.

Except bigger means more compute power, and that's not insignificant. When the consumer of generative AI uses ChatGPT to generate some text or DALL-E to make an image, what happens behind the scenes is hidden away, as it should be. Systems generally shouldn't be so complex and unfriendly that people don't want to use them. But to give you a sense of what's REALLY happening behind the scenes, let me briefly explain what happens. This is kind of like going behind the lanes at a bowling alley and looking at how absurdly complex the pin-setting machine is.

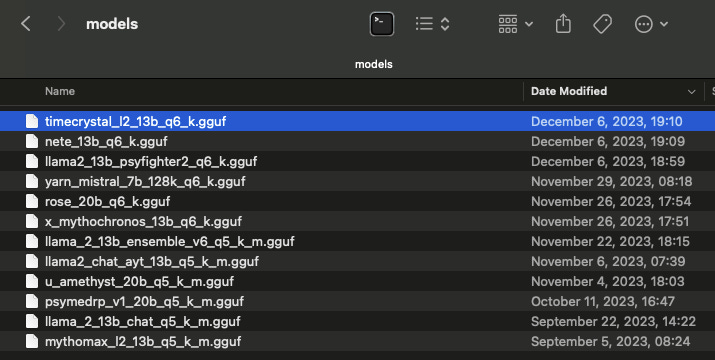

First, you need to have a model itself. The model is usually just a really big file. For open source generative AI, I keep models on an external hard drive because they're really big files.

Next, you need a model loader to load the model and provide some kind of interface for it. The two interfaces I use for open source models are LM Studio for general operations and KoboldCPP for creative writing. You then load the model on your laptop and configure its settings. Again, for a consumer interface like ChatGPT, you never see this part. But if you're building and deploying your own AI inside your company, this part is really important.

You'll set up things like how much memory it should use, what kind of computer you have, how big the model's environment should be, how much working memory it should have, and how it should be made available to you:

And then once it's running, you can start talking to it. When you open a browser window to ChatGPT, all this has happened behind the scenes.

Behind the scenes, as you interact with the model, you can see all the different pieces beginning to operate - how it parses our prompt, how it generates the output one fragment of a word at a time, how much of the working memory has been used up, and how many of these things occur:

Watching these systems do their thing behind the scenes makes it abundantly clear that they are not self-aware, not sentient, have no actual reasoning skills, and are little more than word prediction machines. Which means that a lot of the characteristics we ascribe to them, they don't actually have.

Bigger models take more resources to run, and at the end of the day, even the biggest, most sophisticated model is still nothing more than a word prediction machine. It's very good at what it does, but that is literally all it does.

Which means if we have tasks that aren't word and language-based tasks, language models aren't going to necessarily be good at them. What we need to be thinking about is what are known as agent networks.

An agent network is an ecosystem of AI and non-AI components, all meshed together to create an app that's greater than the sum of its parts. It has a language model to interface with us. It has databases, web browsers, custom code, APIs... everything that a language model might need to accomplish a task. If we think of the language model as the waiter interfacing with us, the agent network is the back of house - the entire kitchen and everyone and everything that does all the cooking.

Just as a waiter rarely, if ever, goes to the line and cooks, a language model should not be going to the back of house to do operations that are not language. Except when we think about tools like ChatGPT, that's exactly what we expect of them - and why we get so disappointed when they don't do as we ask. We assume they're the entire restaurant and they're really just front of house.

So what does this have to do with the future of generative AI? Well, let's put a couple of things together. Bigger models are better but more costly. Recent research from companies like Mistral have demonstrated that you can make highly capable smaller models that, with some tuning, can perform as good or better than big models for the same task, but at a fraction of the cost.

For example, much has been made of the factoid that's been floating around recently that generating an image with AI uses the same amount of power as charging your phone. This was cited from a piece by Melissa Heikkila in the MIT Technology Review from a study that has not been peer-reviewed yet. Is that true? It really depends. But it is absolutely true that the bigger the model, the more power it consumes and the slower it is (or the more powerful your hardware has to be to run it).

If you can run smaller models, you consume less power and get faster results. But a smaller model tends to generate less good quality results. And that's where an agent network comes in. Rather than having one model try to be everything, an agent network has an ensemble of models doing somewhat specialized tasks.

For example, in the process of writing a publication, we humans have writers, editors, and publishers. A writer can be an editor, and an editor can be a publisher, but often people will stick to a role that they're best at. AI models are no different in an agent network. One model generates output, another model critiques it, and an third model supervises the entire process to ensure that the system is generating the desired outputs and following the plan.

This, by the way, is how we make AI safe to use in public. There is no way under the current architecture of AI models to make a model that is fully resistant to being compromised. It's simply not how the transformers architecture and human language work. You can, for example, tell someone not to use racial slurs, but that doesn't stop someone from behaving in a racist manner, it just restricts the most obvious vocabulary. Just as humans use language in an infinite number of ways, so too can language models be manipulated in unpredictable ways.

Now, what is an agent network starting to sound an awful lot like? Yep, you guessed it: the human brain. Disabusing ourselves of the notion of one big model to rule them all, if we change how we think about AI to mirror the way our own brains work, chances are we'll be able to accomplish more and consume fewer resources along the way. Our brain has dozens of regions with individual specializations, individual models if you will. Networked together, they create us, the human being. Our AI systems are likely to follow suit, networking together different models in a system that becomes greater than the individual parts.

Business is no different, right? When you're just starting out, it's you, the solo practitioner. You do it all, from product to service to accounting to legal to sales. You're a one person show. But as time goes on and you become more successful, your business evolves. Maybe you have a salesperson now. Maybe you have a bookkeeper and a lawyer. Your business evolves into an agent network, a set of entities - people, in the case of humans - who specialize at one type of work and interface with each other using language to accomplish more collectively than any one person could do on their own.

This is the way generative AI needs to evolve, and the way that much of the movement is beginning to. While big companies like OpenAI, Meta, and Google tout their latest and greatest big models, an enormous amount is happening with smaller models to make AI systems that are incredibly capable, and companies & individuals who want to truly unlock the full power of AI will embrace this approach.

It's also how you should be thinking about your personal use of AI, even if you never leave an interface like ChatGPT. Instead of trying to do everything all at once in one gigantic prompt, start thinking about specialization in your use of AI. Even something as simple as your prompt library should have specializations. Some prompts are writing prompts, others are editing prompts, and still others are sensitivity reader prompts, as an example. You pull out the right prompts as needed to accomplish more than you could with a single, monolithic "master prompt". If you're a more advanced user, think about the use of Custom GPTs. Instead of one big Content Creation GPT, maybe you have a Writer GPT, an Editor GPT, a critic GPT, etc. and you have an established process for taking your idea through each specialized model.

As we roll into the new year, think of AI not as "the best tool for X", but what ensemble, what toolkit has the pieces you need to accomplish what you want. You'll be more successful, faster, than people looking for the One Model to Rule Them All.

Also, I'm going to take a moment to remind you that my new course, Generative AI for Marketers, goes live on December 13. If you register before the 13th with discount code EARLYBIRD300, you save $300 - a whopping 38% - off the price once the course goes live. The first lesson is free, so go sign up to see what's inside the course and decide whether it's right for you or not, but I will say of all the courses I've put together, this is my favorite yet by a long shot.

How Was This Issue?

Rate this week's newsletter issue with a single click. Your feedback over time helps me figure out what content to create for you.

Share With a Friend or Colleague

If you enjoy this newsletter and want to share it with a friend/colleague, please do. Send this URL to your friend/colleague:

https://www.christopherspenn.com/newsletter

For enrolled subscribers on Substack, there are referral rewards if you refer 100, 200, or 300 other readers. Visit the Leaderboard here.

ICYMI: In Case You Missed it

Besides the new Generative AI for Marketers course I'm relentlessly flogging, I recommend

You Ask, I Answer: Is the Generative AI Space Ripe for Consolidation?

You Ask, I Answer: Future of Retrieval Augmented Generation AI?

You Ask, I Answer: Answering the Same Generative AI Questions?

Almost Timely News, December 3, 2023: AI Content Is Preferred Over Human Content

You Ask, I Answer: Open Weights, Open Source, and Custom GPT Models?

Mind Readings: The Dangers of Excluding Your Content From AI

12 Days of Data

As is tradition every year, I start publishing the 12 Days of Data, looking at the data that made the year. Here's the first 5:

Skill Up With Classes

These are just a few of the classes I have available over at the Trust Insights website that you can take.

Premium

Free

⭐️ The Marketing Singularity: How Generative AI Means the End of Marketing As We Knew It

Powering Up Your LinkedIn Profile (For Job Hunters) 2023 Edition

Empower Your Marketing With Private Social Media Communities

Paradise by the Analytics Dashboard Light: How to Create Impactful Dashboards and Reports

Advertisement: Generative AI Workshops & Courses

Imagine a world where your marketing strategies are supercharged by the most cutting-edge technology available – Generative AI. Generative AI has the potential to save you incredible amounts of time and money, and you have the opportunity to be at the forefront. Get up to speed on using generative AI in your business in a thoughtful way with Trust Insights' new offering, Generative AI for Marketers, which comes in two flavors, workshops and a course.

Workshops: Offer the Generative AI for Marketers half and full day workshops at your company. These hands-on sessions are packed with exercises, resources and practical tips that you can implement immediately.

👉 Click/tap here to book a workshop

Course: We’ve turned our most popular full-day workshop into a self-paced course. The Generative AI for Marketers online course launches on December 13, 2023. You can reserve your spot and save $300 right now with your special early-bird discount! Use code: EARLYBIRD300. Your code expires on December 13, 2023.

👉 Click/tap here to pre-register for the course

Get Back to Work

Folks who post jobs in the free Analytics for Marketers Slack community may have those jobs shared here, too. If you're looking for work, check out these recent open positions, and check out the Slack group for the comprehensive list.

What I'm Reading: Your Stuff

Let's look at the most interesting content from around the web on topics you care about, some of which you might have even written.

Social Media Marketing

Future of Social Media Predictions for 2024 via Sprout Social

TikTok from Creator Fund to Creativity Program via Sprout Social

Media and Content

SEO, Google, and Paid Media

Advertisement: Business Cameos

If you're familiar with the Cameo system - where people hire well-known folks for short video clips - then you'll totally get Thinkers One. Created by my friend Mitch Joel, Thinkers One lets you connect with the biggest thinkers for short videos on topics you care about. I've got a whole slew of Thinkers One Cameo-style topics for video clips you can use at internal company meetings, events, or even just for yourself. Want me to tell your boss that you need to be paying attention to generative AI right now?

📺 Pop on by my Thinkers One page today and grab a video now.

Tools, Machine Learning, and AI

Announcing Purple Llama: Towards open trust and safety in the new world of generative AI

Google's Gemini AI launch marred by questions over capabilities via VentureBeat

Analytics, Stats, and Data Science

The Ultimate Guide to Power BI Visualizations via Analytics Vidhya

8 GitHub Alternatives for Data Science Projects via Analytics Vidhya

All Things IBM

Six ways AI can influence the future of customer service via IBM Blog

IBM Is Planning to Build Its First Fault-Tolerant Quantum Computer by 2029

Dealer's Choice : Random Stuff

How to Stay in Touch

Let's make sure we're connected in the places it suits you best. Here's where you can find different content:

My blog - daily videos, blog posts, and podcast episodes

My YouTube channel - daily videos, conference talks, and all things video

My company, Trust Insights - marketing analytics help

My podcast, Marketing over Coffee - weekly episodes of what's worth noting in marketing

My second podcast, In-Ear Insights - the Trust Insights weekly podcast focused on data and analytics

On Threads - random personal stuff and chaos

On LinkedIn - daily videos and news

On Instagram - personal photos and travels

My free Slack discussion forum, Analytics for Marketers - open conversations about marketing and analytics

Advertisement: Ukraine 🇺🇦 Humanitarian Fund

The war to free Ukraine continues. If you'd like to support humanitarian efforts in Ukraine, the Ukrainian government has set up a special portal, United24, to help make contributing easy. The effort to free Ukraine from Russia's illegal invasion needs our ongoing support.

👉 Donate today to the Ukraine Humanitarian Relief Fund »

Events I'll Be At

Here's where I'm speaking and attending. Say hi if you're at an event also:

Social Media Marketing World, San Diego, February 2024

MarketingProfs AI Webinar, Online, March 2024

Australian Food and Grocery Council, Melbourne, May 2024

MAICON, Cleveland, September 2024

Events marked with a physical location may become virtual if conditions and safety warrant it.

If you're an event organizer, let me help your event shine. Visit my speaking page for more details.

Can't be at an event? Stop by my private Slack group instead, Analytics for Marketers.

Required Disclosures

Events with links have purchased sponsorships in this newsletter and as a result, I receive direct financial compensation for promoting them.

Advertisements in this newsletter have paid to be promoted, and as a result, I receive direct financial compensation for promoting them.

My company, Trust Insights, maintains business partnerships with companies including, but not limited to, IBM, Cisco Systems, Amazon, Talkwalker, MarketingProfs, MarketMuse, Agorapulse, Hubspot, Informa, Demandbase, The Marketing AI Institute, and others. While links shared from partners are not explicit endorsements, nor do they directly financially benefit Trust Insights, a commercial relationship exists for which Trust Insights may receive indirect financial benefit, and thus I may receive indirect financial benefit from them as well.

Thank You

Thanks for subscribing and reading this far. I appreciate it. As always, thank you for your support, your attention, and your kindness.

See you next week,

Christopher S. Penn

A great breakdown of where generative AI is heading! The pace of innovation in this space is incredible, and it’s exciting to see how it continues to evolve. Appreciate the insights!